While the data we worked on here may not be ‘Big Data’ from a size or volume perspective, these techniques and methodologies are generic enough to scale for larger volumes of data. We took a hands-on approach to data wrangling, parsing, analysis and visualization at scale on a very common yet essential case-study on Log Analytics. set_option (‘max_rows’, def_mr ) Conclusion We can now reset the maximum rows displayed by pandas to the default value since we had changed it earlier to display a limited number of rows. Looks like total 404 errors occur the most in the afternoon and the least in the early morning. Total 404 errors per hour in a bar chart. describe() function returns the count, mean, stddev, min, and maxof a given column in this format: describe() on the content_size column of logs_df. In particular, we’d like to know the average, minimum, and maximum content sizes. Let’s compute some statistics regarding the size of content our web server returns.

Now that we have a DataFrame containing the parsed and cleaned log file as a data frame, we can perform some interesting exploratory data analysis (EDA) to try and get some interesting insights! Content size statistics Here in part two, we focus on analyzing that data. Then we wrangled our log data into a clean, structure, and meaningful format.

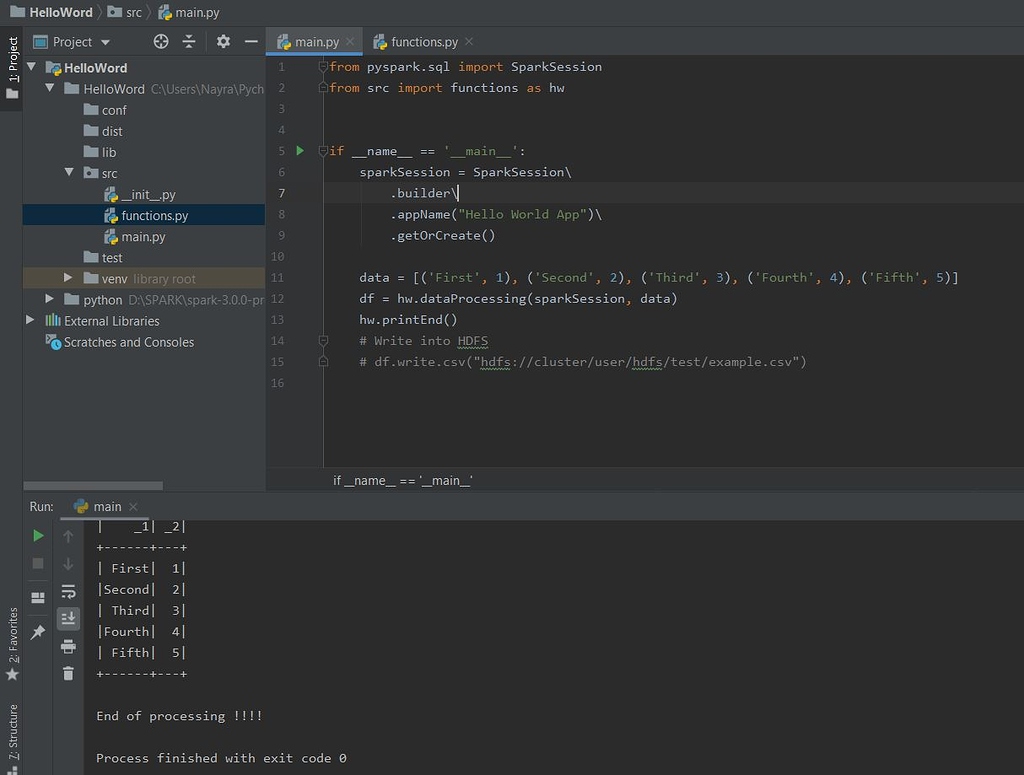

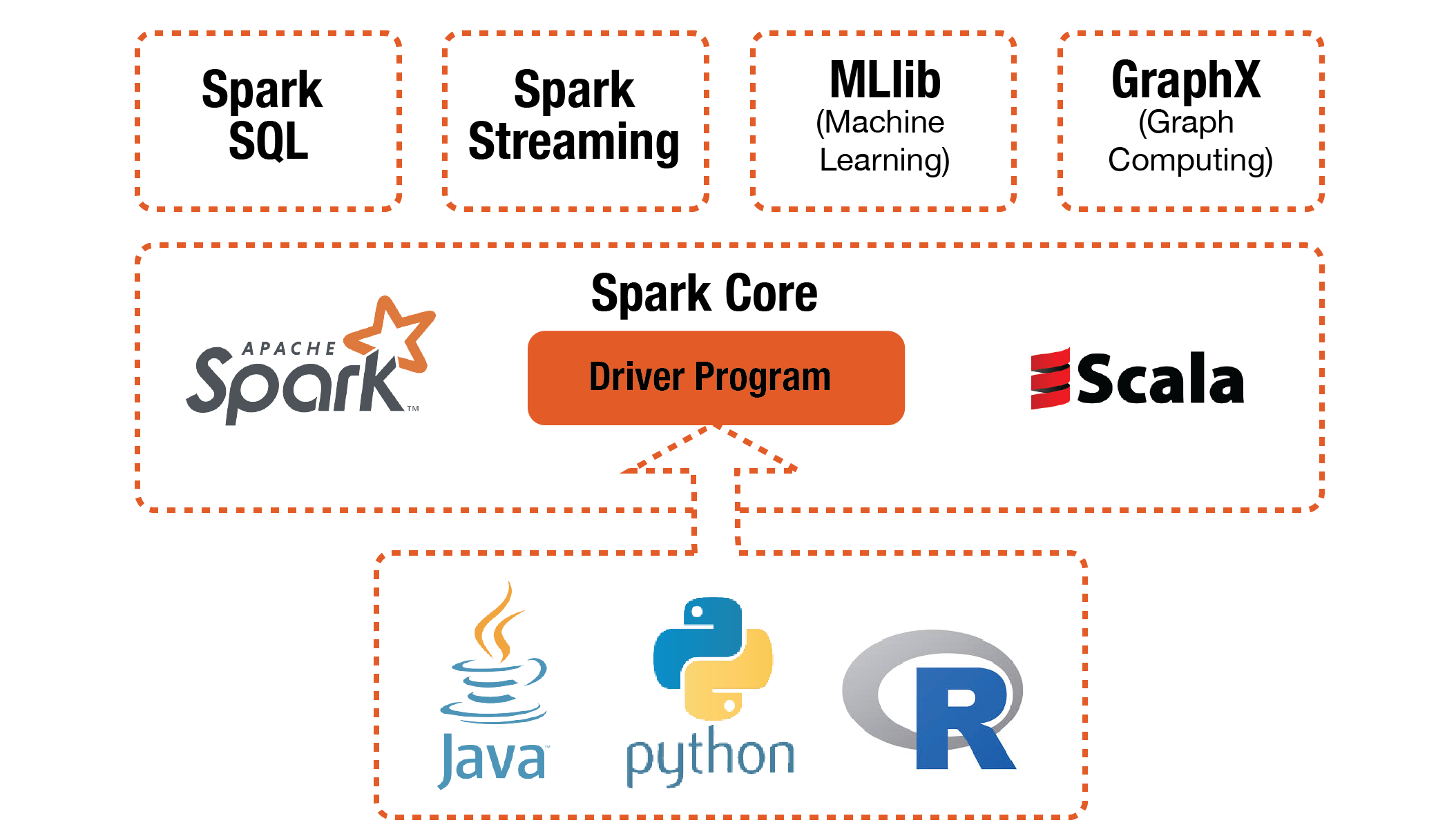

We set up environment variables, dependencies, loaded the necessary libraries for working with both DataFrames and regular expressions, and of course loaded the example log data. In part one of this series, we began by using Python and Apache Spark to process and wrangle our example web logs into a format fit for analysis, a vital technique considering the massive amount of log data generated by most organizations today.
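As a companion to the content size statistics, here is a minimal plain-Python sketch of the five values that `describe()` reports for a numeric column. It does not call Spark. The `content_sizes` values and the `summarize_sizes` helper are hypothetical and made up for illustration, and computing stddev as the sample standard deviation is an assumption about the convention used.

```python
import statistics

# Hypothetical response sizes in bytes. These values are invented for
# illustration and are not taken from the log data used in the article.
content_sizes = [0, 512, 1024, 2048, 4096]


def summarize_sizes(values):
    """Return count, mean, stddev, min, and max for a list of numbers.

    This is a hypothetical helper that mirrors the kind of summary
    describe() reports; it is not part of Spark or pandas.
    """
    return {
        "count": len(values),
        "mean": statistics.mean(values),
        # Sample standard deviation (divides by n - 1); assumed convention.
        "stddev": statistics.stdev(values),
        "min": min(values),
        "max": max(values),
    }


summary = summarize_sizes(content_sizes)
for name, value in summary.items():
    print(f"{name}: {value}")
```

On the real data, the same five numbers would come from the `describe()` call on the `content_size` column rather than from a helper like this.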
